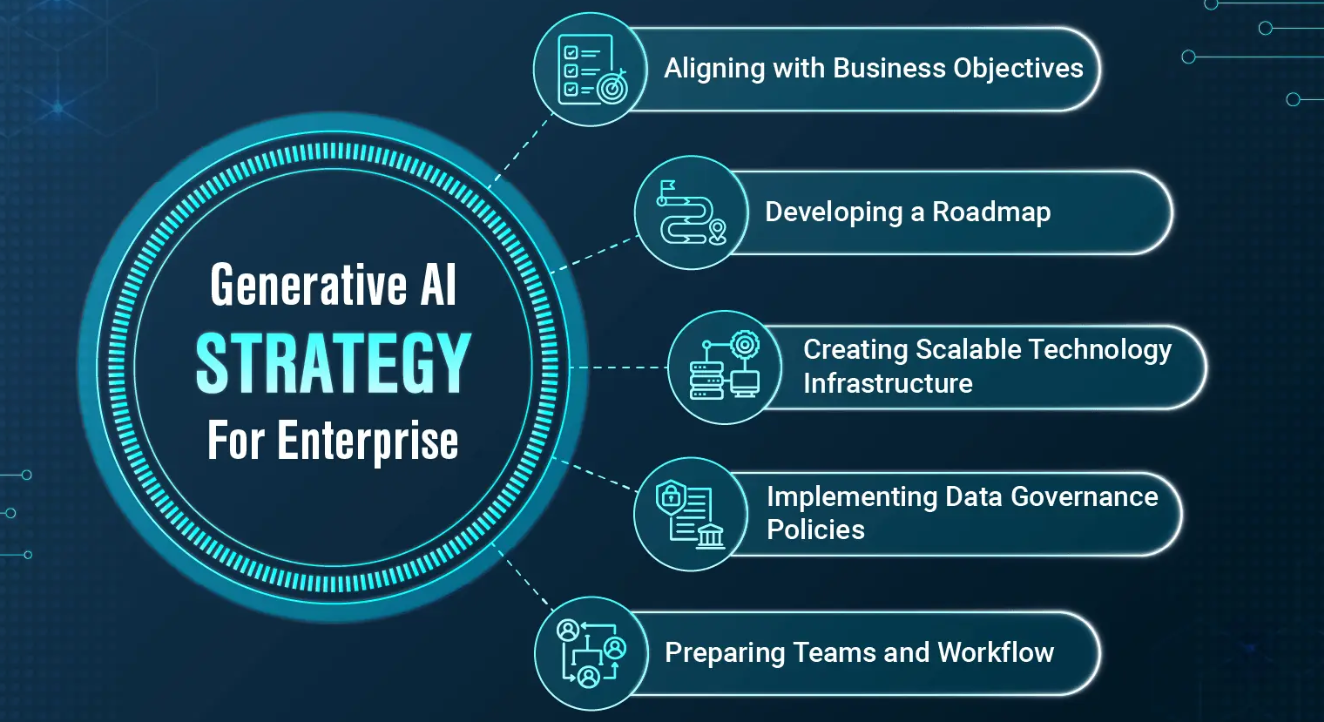

Enterprise AI Strategy

Defining and Owning a Multi-Year AI Journey Aligned to Corporate Priorities

The Challenge: Many organizations invest heavily in AI and generative AI pilots, yet struggle to translate experimentation into sustained, enterprise-wide value. Common pitfalls include:

- Misalignment between AI initiatives and core business objectives

- Fragmented efforts across operating companies or business units

- Lack of clear prioritization, leading to scattered resources and “pilot purgatory”

- Insufficient data readiness, talent, governance, and infrastructure

- Weak executive sponsorship and cultural resistance to change

Without a cohesive, multi-year strategy, AI investments deliver inconsistent ROI, create governance gaps, and fail to scale effectively across the enterprise.

The Challenge: Most organizations struggle with fragmented, poor-quality, or inaccessible data that prevents effective AI and analytics adoption. Common issues include:

- Disconnected data silos across legacy systems, cloud applications, and operating companies

- Inconsistent data quality, duplication, and lack of trust in insights

- Slow or brittle ETL processes that cannot scale with growing data volumes

- Inadequate infrastructure for real-time or batch AI/ML workloads

- Weak governance, security, and compliance controls

- High maintenance costs and dependency on specialized talent

Without a solid data engineering foundation, even the best AI strategies and models fail to deliver sustained business value.

Our Approach as Your AI/GenAI Consulting Partner

At [Your Firm Name], our Data Engineering service is designed to build a future-proof, AI-ready data capability tailored to your enterprise needs. We act as your strategic implementation partner assessing maturity, designing modern architectures, executing robust pipelines, and transferring knowledge so your teams can own and evolve the platform long-term.

Engagements typically range from 3–9 months depending on scope, with options for ongoing managed support or quarterly optimization sprints.

What Is Involved: Our Phased Methodology

Phase 1: Data Landscape Assessment & Requirements Gathering (3–5 weeks)

- Conduct comprehensive audits of existing data sources, flows, quality, and pain points across business units and operating companies

- Interview stakeholders to align on business and AI use case requirements (volume, velocity, variety, and veracity)

- Evaluate current tools, cloud readiness, integration challenges, and compliance needs

- Identify quick wins for immediate data quality or access improvements

Key Deliverables: Current-state architecture diagram, data maturity assessment, and prioritized requirements backlog.

Phase 2: Target Architecture Design (4–6 weeks)

- Design a modern, scalable data architecture (Medallion architecture, lakehouse, or hybrid) using best-in-class patterns

- Recommend optimal technology stack: cloud platforms (AWS, Azure, GCP), data lakes (S3, ADLS, GCS), warehouses (Snowflake, Databricks, BigQuery), orchestration tools (Airflow, dbt, Prefect), and streaming (Kafka, Flink)

- Define data modeling standards, schema evolution, and partitioning strategies optimized for AI/ML workloads

- Incorporate real-time, batch, and event-driven patterns as needed

Key Deliverables: Future-state reference architecture, technology recommendations, and cost/benefit analysis.

Phase 3: Data Pipeline Development & Implementation (8–16 weeks)

- Build robust ingestion, transformation, and orchestration pipelines (ETL/ELT) using modern frameworks

- Implement data quality rules, validation, cleansing, and enrichment processes

- Develop incremental loading, change data capture (CDC), and error-handling mechanisms

- Create unified data models and semantic layers for consumption by analytics and AI teams

- Enable self-service data access through catalogs and governed sandboxes

Key Activities: Agile development with iterative releases, automated testing, and performance tuning.

Phase 4: Governance, Security & Quality Framework

- Establish enterprise data governance policies, stewardship roles, and ownership models

- Implement data cataloging, lineage, and metadata management

- Enforce security controls (encryption, access policies, masking) and compliance (GDPR, CCPA, industry-specific)

- Set up monitoring, alerting, and SLA dashboards for pipeline reliability

Phase 5: Testing, Deployment & Knowledge Transfer (4–6 weeks)

- Conduct rigorous testing for accuracy, performance, scalability, and resilience

- Deploy to production environments with CI/CD and MLOps integration

- Deliver hands-on training, documentation, and runbooks for your internal teams

- Establish ongoing support and optimization processes

Key Deliverables

- Modern, scalable data platform and pipelines supporting both analytics and AI workloads

- Comprehensive data governance, quality, and catalog framework

- Detailed architecture diagrams, pipeline documentation, and operational runbooks

- Data maturity improvement roadmap and talent upskilling plan

- Production-ready, monitored data infrastructure with SLAs

- Executive and technical handover sessions with knowledge transfer

Benefits for Your Organization

- Trusted Data Foundation — High-quality, accessible data that powers reliable AI and analytics outcomes

- Accelerated AI Initiatives — Faster time-to-value for machine learning and generative AI projects

- Significant Cost Savings — Reduced manual data wrangling and infrastructure maintenance

- Improved Decision Making — Real-time or near-real-time insights across the enterprise

- Scalability & Flexibility — Architecture that grows with data volume and new use cases

- Reduced Risk — Strong governance, security, and compliance built by design

Typical results include 50–70% faster data delivery for new use cases, dramatic improvements in data quality scores, and a foundation that enables multiple AI solutions to launch successfully within months rather than years.

Zenith AI Company

Zenith AI Company